Multimodal Vision-Agent AI

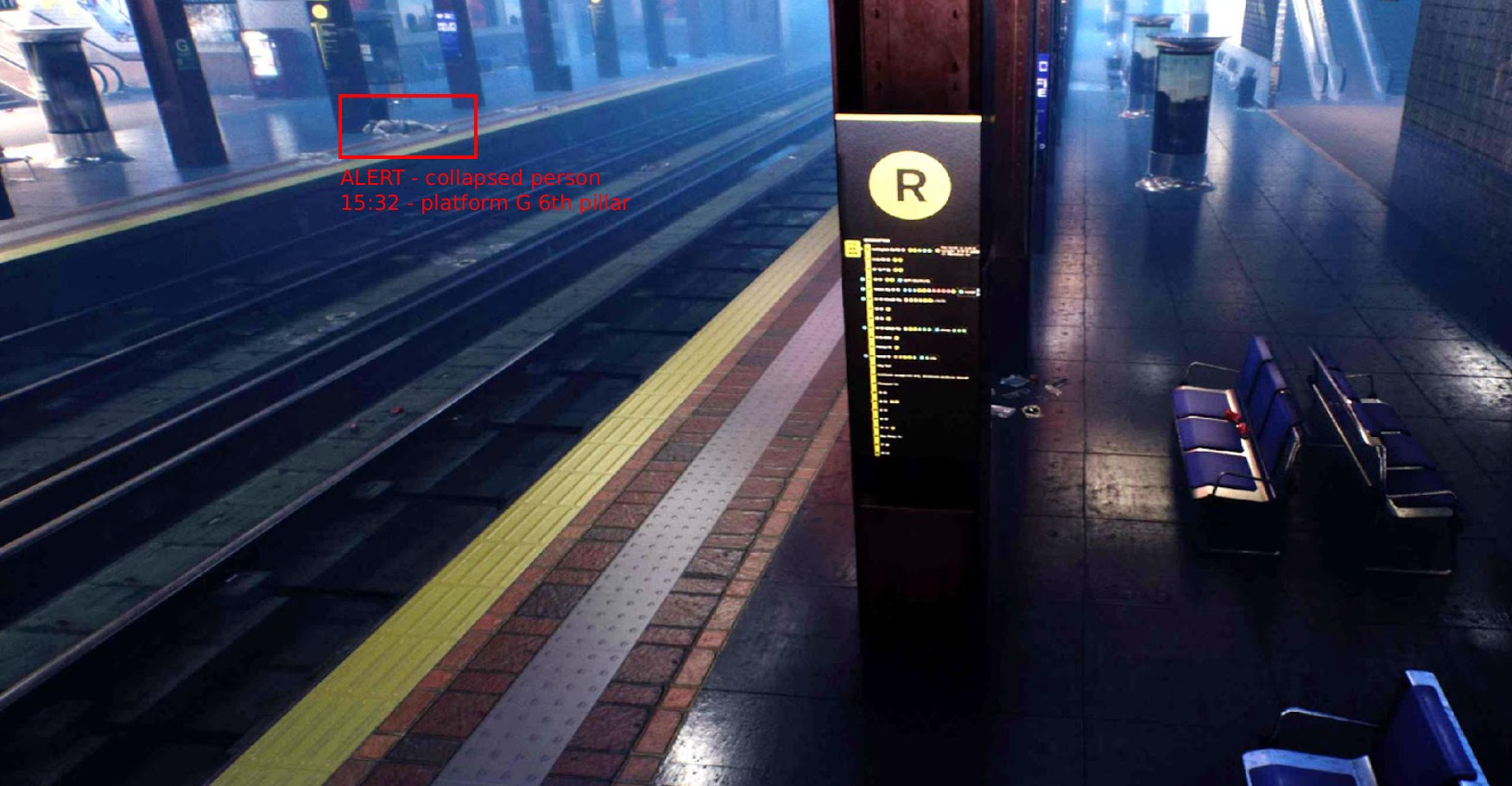

From raw video to actionable operational intelligence. Our Vision AI platform goes beyond classic detection to help organizations decide what to do about what they see.

Why Vision-Agent AI

A classic computer vision system can tell you what was likely seen. A Multimodal Vision-Agent AI platform helps determine what that event means in context.

Beyond detection

Classic computer vision tells you what it sees. Our Vision-Agent AI platform helps you decide what to do about it, combining perception with contextual reasoning and actionable outputs.

Operational intelligence

The system does not just generate more alerts. It verifies candidate events against footage, adds reasoning, and publishes a verdict downstream. Better alerts, not more alerts.

Operational memory

Video becomes searchable and queryable. Teams can find relevant events, retrieve clips, summarize what happened, and generate reports without scrubbing through hours of footage.

Human-in-the-loop by design

The platform reduces noise, adds context, and surfaces high-priority events first. It supports operators rather than overwhelming them, keeping human judgment at the center.

A layered operational pipeline

Real-time intelligence at the front, analytics in the middle, and agent workflows on top for reporting, question answering, and search.

Operational Pipeline

Multimodal Sensing

The platform ingests multiple data streams simultaneously: IP camera feeds via RTSP/ONVIF, microphone arrays, IoT sensor telemetry, and edge device signals. Streams are time-synchronized and normalized into a unified perception frame that downstream layers can reason over.
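As a rough illustration of the time-synchronization step, the sketch below groups readings from different streams into fixed-width time windows. It is not the platform's implementation: the `Reading` and `PerceptionFrame` types, the `build_frames` helper, the stream names, and the one-second window are all assumptions made for this example.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Reading:
    source: str       # hypothetical stream id, e.g. "camera-14"
    modality: str     # "video", "audio", or "iot"
    timestamp: float  # seconds, assumed already corrected to a shared clock
    payload: dict


@dataclass
class PerceptionFrame:
    window_start: float
    readings: dict = field(default_factory=dict)  # modality -> list of Reading


def build_frames(readings, window_s=1.0):
    """Group readings from all streams into fixed-width time windows."""
    buckets = defaultdict(lambda: defaultdict(list))
    for r in readings:
        # An integer window index keeps bucket keys exact.
        index = int(r.timestamp // window_s)
        buckets[index][r.modality].append(r)
    return [
        PerceptionFrame(window_start=index * window_s, readings=dict(by_modality))
        for index, by_modality in sorted(buckets.items())
    ]


# Illustrative data only.
readings = [
    Reading("camera-14", "video", 100.20, {"frame_id": 3006}),
    Reading("mic-02", "audio", 100.75, {"rms_db": -18.5}),
    Reading("door-sensor-7", "iot", 101.10, {"open": True}),
]
frames = build_frames(readings)
for frame in frames:
    print(frame.window_start, sorted(frame.readings))
```

Fixed windows are the simplest choice for a sketch. A production system would also have to handle clock drift between devices and late-arriving readings, which this example ignores.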

From perception to operational intelligence

Our Vision AI platform goes beyond classic computer vision. It combines real-time perception with contextual reasoning, risk verification, and agentic workflows, turning raw video into actionable operational intelligence. Instead of just detecting objects, the system understands whether an event matters, how urgent it is, and what should happen next.

Knowledge Connected

Linked to your internal knowledge bases, threat libraries, and procedures

Context Sensitive

Understands situations and context, not just the objects in view

Flexible

The same model can detect many different situations

Omni-Deployable

Cloud, edge, on-premises, or air-gapped. The model fits anywhere

Verified alerts, evidence, reports, and workflow actions

Cross-cutting platform services

API, configuration, prompts, and observability

Supports orchestration, monitoring, health checks, logs, traces, and controlled platform behavior.

Inputs

Multi-stream ingestion from IP cameras, microphones, IoT sensors, and edge devices. Supports RTSP, ONVIF, and custom protocols.

Better alerts, not more alerts

Many environments generate more video and more alerts than any human team can realistically process. In our vision-agent architecture, one layer generates candidate events while another verifies those events against the relevant footage and adds reasoning before publishing a verdict. The goal is to reduce false positives and produce better, more actionable alerts.
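The propose-then-verify flow described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the platform's code: `CandidateEvent`, `Verdict`, `verify`, `publish`, the clip paths, and the work-order lookup are hypothetical, and `stub_reasoner` stands in for a reasoning layer that would actually inspect the footage.

```python
from dataclasses import dataclass

# Hypothetical context: zones with an active, confirmed work order.
ZONES_WITH_WORK_ORDER = {"B"}


@dataclass
class CandidateEvent:
    camera: str
    zone: str
    label: str
    clip_ref: str  # hypothetical pointer to the relevant footage


@dataclass
class Verdict:
    event: CandidateEvent
    actionable: bool
    reasoning: str
    evidence: str


def verify(event, reasoner):
    """Layer 2: check a candidate against its footage and attach reasoning."""
    actionable, reasoning = reasoner(event)
    return Verdict(event, actionable, reasoning, evidence=event.clip_ref)


def publish(candidates, reasoner):
    """Return every verdict plus the subset that should raise an alert."""
    verdicts = [verify(c, reasoner) for c in candidates]
    alerts = [v for v in verdicts if v.actionable]
    return verdicts, alerts


def stub_reasoner(event):
    # Placeholder for the reasoning layer; a real one would examine the clip
    # and consult context such as badges and work orders.
    if event.label == "person_in_restricted_zone" and event.zone in ZONES_WITH_WORK_ORDER:
        return False, "Scheduled maintenance in this zone; work order on file."
    return True, "No authorization context found for this event."


# Layer 1 output (candidate events) is assumed to come from a detector.
candidates = [
    CandidateEvent("camera-14", "B", "person_in_restricted_zone", "clips/cam14-0234.mp4"),
    CandidateEvent("camera-09", "D", "person_in_restricted_zone", "clips/cam09-0241.mp4"),
]
verdicts, alerts = publish(candidates, stub_reasoner)
for v in verdicts:
    print(v.event.camera, "ALERT" if v.actionable else "suppressed", "-", v.reasoning)
```

Keeping every verdict, including suppressed ones, means the reasoning and evidence stay available for later review even when no alert is raised.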

Two-Layer Verification

Detection layer proposes, reasoning layer verifies against footage

Contextual Reasoning

Determines whether an event is truly dangerous or simply unusual

Natural Language Verdicts

Clear explanations of what happened and why it matters

Evidence-Based

Every alert comes with supporting footage and a reasoning chain

Person detected in restricted zone B

Smoke-like pattern detected near conveyor 7

Motion detected in perimeter zone

Person detected in restricted zone B

Camera 14 · Zone B · 02:34 AM

Authorized maintenance worker performing scheduled task. Badge verified, work order confirmed.

From monitoring to searchable operational memory

Modern video AI is no longer only about what happens live. It is also about what can be found later. Our platform turns video into operational memory. Instead of asking a person to scrub through hours of footage, a team can search for a relevant event, retrieve the right clip, summarize what happened, and generate a report with much less manual effort.

Video Search

Natural language search across stored video and event logs

Auto-Summarization

AI-generated summaries of incidents and time periods

Report Generation

Automated reporting with evidence, timeline, and context

Q&A Over Video

Ask questions about past events and get grounded answers

"Show all forklift near-misses in warehouse 3 last week"

Forklift reversed into pedestrian path

Forklift blind-spot incident at intersection

Pedestrian entered forklift active zone
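One way to picture a query like the one above is as a structured filter over an event log. The sketch below assumes the natural-language question has already been translated into a tag, a site, and a time range (that translation step is not shown), and the `EventRecord` type, `search` function, tags, dates, and clip paths are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class EventRecord:
    timestamp: datetime
    site: str
    tags: frozenset
    summary: str
    clip_ref: str  # hypothetical pointer to the stored clip


def search(events, *, tag, site, start, end):
    """Return matching events in chronological order."""
    hits = [
        e for e in events
        if tag in e.tags and e.site == site and start <= e.timestamp < end
    ]
    return sorted(hits, key=lambda e: e.timestamp)


# Illustrative log entries only.
now = datetime(2024, 5, 13, 9, 0)
near_miss = frozenset({"forklift", "near_miss"})
log = [
    EventRecord(now - timedelta(days=2), "warehouse-3", near_miss,
                "Forklift reversed into pedestrian path", "clips/w3-001.mp4"),
    EventRecord(now - timedelta(days=5), "warehouse-3", near_miss,
                "Pedestrian entered forklift active zone", "clips/w3-002.mp4"),
    EventRecord(now - timedelta(days=3), "warehouse-1", near_miss,
                "Forklift blind-spot incident at intersection", "clips/w1-001.mp4"),
    EventRecord(now - timedelta(days=20), "warehouse-3", near_miss,
                "Forklift reversed into pedestrian path", "clips/w3-003.mp4"),
]

results = search(log, tag="near_miss", site="warehouse-3",
                 start=now - timedelta(days=7), end=now)
for r in results:
    print(r.timestamp.date(), r.summary, r.clip_ref)
```

Each hit carries a clip reference, so a result list can link straight to the supporting footage rather than to a timestamp someone has to look up.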

Where the value is most immediate

The opportunity is strongest in environments with many cameras, high review costs, real operational risk, and a clearly defined team that owns the response.

Rail Safety

Crossing monitoring, platform safety, track intrusion detection, and incident verification across rail infrastructure

Warehouse Operations

Forklift-pedestrian collision prevention, zone monitoring, safety compliance, and near-miss analysis

Industrial Monitoring

Equipment state detection, safety zone enforcement, PPE compliance, and process monitoring

Smart-City Operations

Traffic analysis, public space safety, crowd monitoring, and infrastructure protection

Logistics Hubs

Loading dock safety, vehicle routing, inventory monitoring, and operational efficiency analysis

Critical Infrastructure

Perimeter security, access control, anomaly detection, and multi-sensor surveillance integration

Why it is better than classic machine learning alone

Classic vision systems remain an important part of the stack. But on their own, they are limited by rules, fixed attributes, and the need for manual interpretation.

Object labels → Contextual reasoning

Raw detections → Verified verdicts

Bounding boxes → Natural-language explanations

No retention → Searchable operational memory

Manual handoff → Agentic workflows

Object labels

Detects objects and assigns fixed labels. No understanding of context, relationships, or intent.

Contextual reasoning

Understands situations: who is there, whether they belong, what they are doing, and whether it matters.