From Detection to Prevention: How Multimodal AI Can Improve Safety and Efficiency

In complex environments such as construction sites, logistics hubs, rail infrastructure, industrial plants, and public spaces, safety depends on fast and reliable awareness. Risks often emerge in seconds: a spark becomes a fire, a pedestrian enters a restricted zone, a worker forgets critical protective equipment, or an unsafe interaction with machinery goes unnoticed.

That is exactly where multimodal AI can create value.

At OneBonsai, we are exploring how object recognition and multimodal reasoning can help organizations detect unsafe situations earlier, respond faster, and operate more efficiently. By combining visual and audio input, AI systems can move beyond simple monitoring and start understanding what is happening in an environment in real time.

This fits naturally with OneBonsai’s broader work in applied innovation, where emerging technologies such as GenAI and digital twins are used to solve concrete operational challenges around safety, retraining, efficiency, and scale.

How does it work?

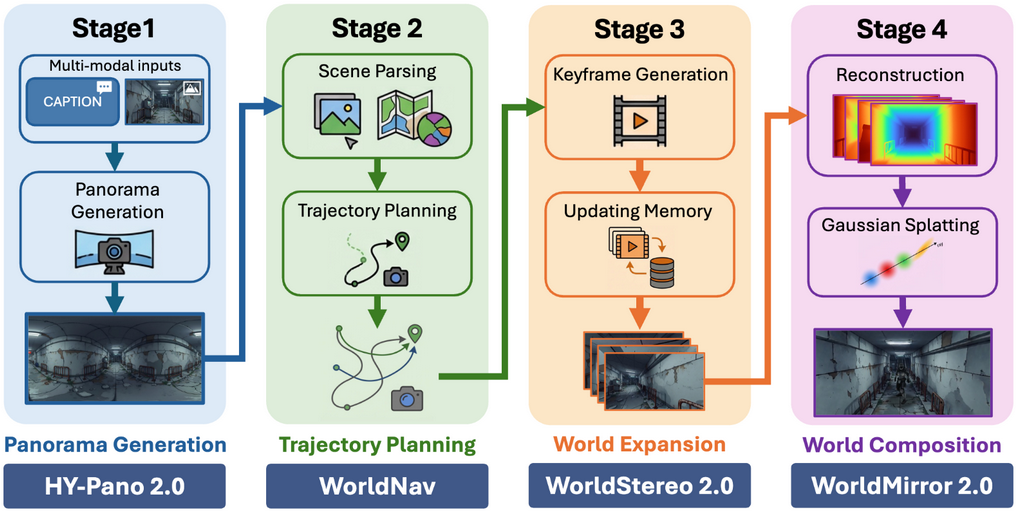

Our model works with multiple types of input, including video and audio streams. By combining these signals, it can reason across modalities and detect patterns of human behavior, environmental change, and physical events.

Instead of analyzing each signal in isolation, the platform interprets them together. A camera may detect smoke, while audio may capture an abnormal sound pattern such as a crack, alarm, or impact. When these signals are combined, the system gains more context and can make faster, more reliable decisions.
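To make the value of combining signals concrete, here is a minimal sketch of one simple fusion rule, a noisy-OR over per-modality confidences. It assumes that each modality outputs an independent probability for the same event. The function names, signal names and the 0.8 alert threshold are hypothetical illustrations, not a description of how OneBonsai's model fuses signals internally.

```python
from math import prod


def fuse_noisy_or(scores: dict[str, float]) -> float:
    """Combine per-modality event probabilities with a noisy-OR rule.

    Assumes each modality provides independent evidence for the same event:
    the event is missed only if every modality misses it.
    """
    for name, p in scores.items():
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"score for {name!r} must be in [0, 1], got {p}")
    return 1.0 - prod(1.0 - p for p in scores.values())


def should_alert(scores: dict[str, float], threshold: float = 0.8) -> bool:
    """Raise an alert when the fused confidence reaches the (hypothetical) threshold."""
    return fuse_noisy_or(scores) >= threshold


# Smoke seen by a camera and a crackling sound picked up by a microphone.
signals = {"video_smoke": 0.6, "audio_crackle": 0.55}
print(round(fuse_noisy_or(signals), 2))  # 0.82, i.e. 1 - 0.4 * 0.45
print(should_alert(signals))             # True, although neither signal alone reaches 0.8
```

The example shows the point made above: two moderately confident observations, each too weak to trigger an alert, together cross the threshold. A learned multimodal model can capture dependencies between signals that this independence assumption ignores.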

This multimodal approach makes it possible to detect anomalies such as:

- early signs of fire or smoke

- missing personal protective equipment

- unsafe movement around vehicles or machines

- prohibited access to dangerous zones

- abnormal events at public crossings or stations

- suspicious behavior related to theft or intrusion

In practical terms, the system turns raw sensory input into actionable intelligence. The output can be a structured text alert, a warning in a dashboard, or a signal that triggers a downstream action such as an alarm, a notification, or an intervention workflow.
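A structured alert like this is easiest to route when it has a fixed schema that both machines and people can read. The sketch below assumes a hypothetical `SafetyAlert` record whose field names, severity bands and example values are illustrative only. It serializes the alert to JSON for downstream systems and renders a short text line for a dashboard or message.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class SafetyAlert:
    """Hypothetical schema for a structured alert; field names are illustrative."""
    event_type: str        # e.g. "missing_ppe", "smoke", "zone_intrusion"
    confidence: float      # detection confidence in [0, 1]
    location: str          # camera or zone identifier
    modalities: list[str]  # inputs that contributed, e.g. ["video", "audio"]
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Machine-readable form for dashboards, alarms or workflow engines."""
        return json.dumps(asdict(self))

    def summary(self) -> str:
        """Human-readable one-line alert, with hypothetical severity bands."""
        if self.confidence >= 0.9:
            level = "HIGH"
        elif self.confidence >= 0.6:
            level = "MEDIUM"
        else:
            level = "LOW"
        sources = ", ".join(self.modalities)
        return (f"[{level}] {self.event_type} at {self.location} "
                f"({self.confidence:.0%} confidence; {sources})")


alert = SafetyAlert("missing_ppe", 0.93, "zone-B/cam-04", ["video"])
print(alert.summary())  # [HIGH] missing_ppe at zone-B/cam-04 (93% confidence; video)
print(alert.to_json())
```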

Designed for leading enterprises in construction, manufacturing, logistics, defense and more

OneBonsai already focuses on real operational challenges such as safety, large-scale training, retraining, and efficiency across industries. This new platform extends that same mindset into live operational environments.

1. Smarter industrial safety

Industrial environments are full of small signals that can precede serious incidents. A worker entering a hazardous area without PPE, smoke forming near equipment, or a vehicle operating too close to pedestrians can all escalate quickly.

Our platform helps organizations detect these situations in real time and act before they become accidents. This supports a shift from reactive reporting to proactive prevention.

For companies already investing in safety training and digital transformation, this creates a powerful bridge between learning and operations: not only can teams train for safer behavior, but the environment itself can help reinforce it. It draws directly on OneBonsai’s work in safety training, hazard spotting, and AI-enhanced digital twins for monitoring and maintenance.

2. Smarter logistics

In logistics, speed and safety must go hand in hand. Warehouses, yards, and distribution centers are dynamic spaces where people, vehicles, materials, and time-sensitive processes constantly interact.

Multimodal AI can detect unsafe crossings, congestion risks, unusual motion patterns, or operational disruptions before they affect throughput. It can also help monitor towing operations, loading zones, and restricted areas where even a small mistake can lead to delays or injuries.

This builds on OneBonsai’s experience with logistics solutions, where virtual twins and scalable training helped reduce instructor time and improve operational readiness.

3. Improved public safety

Public infrastructure requires constant vigilance, especially in locations where a moment of inattention can have severe consequences.

A strong example is railway safety. If someone enters a railway crossing while it is closed, every second matters. The same applies to intrusion in restricted transit zones, suspicious activity, or early warning signs of incidents in public environments.

By combining video and sound, the system can recognize hazardous events faster and provide immediate alerts to operators, authorities, or connected systems. It can also support use cases such as theft detection, abnormal crowd behavior, and emergency escalation.
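At its core, flagging someone on a closed crossing is a geometric check applied to the detector's output. The sketch below assumes a person detector that returns one ground-contact point per person, such as the bottom-centre of a bounding box in image coordinates, and a polygon drawn once per camera around the crossing area. It uses the standard even-odd ray-casting test. The zone coordinates and the `crossing_closed` flag are hypothetical inputs.

```python
Point = tuple[float, float]


def point_in_polygon(p: Point, polygon: list[Point]) -> bool:
    """Even-odd ray casting: count how many edges a ray from p towards +x crosses."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can be crossed.
        if (y1 > y) != (y2 > y):
            # x coordinate where this edge meets that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def intrusions(people: list[Point], zone: list[Point], crossing_closed: bool) -> list[Point]:
    """Return detected people inside the zone, but only while the crossing is closed."""
    if not crossing_closed:
        return []
    return [p for p in people if point_in_polygon(p, zone)]


# Crossing area in image coordinates (pixels), drawn once per camera.
crossing_zone = [(100, 400), (540, 400), (540, 470), (100, 470)]
detected_feet = [(320, 430), (50, 430)]

print(intrusions(detected_feet, crossing_zone, crossing_closed=True))   # [(320, 430)]
print(intrusions(detected_feet, crossing_zone, crossing_closed=False))  # []
```

Combining the zone check with the crossing state keeps ordinary pedestrian traffic from triggering alerts while the crossing is open.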

4. Better situational awareness for high-risk environments

In defense, critical infrastructure, and other high-risk environments, human operators often work under pressure and with incomplete information.

A multimodal AI layer can enhance situational awareness by continuously interpreting what is happening across a site and flagging relevant events in real time. That means fewer blind spots, faster response, and better coordination between human teams and digital systems.

This becomes especially valuable in scenarios involving airspace monitoring and drone activity. The platform can support drone recognition by detecting and classifying unmanned aerial systems through visual and audio signals, helping operators distinguish between expected and suspicious aerial behavior. In more advanced security setups, these detections can also feed into counter-drone systems, enabling faster escalation, threat assessment, and protective action around sensitive sites.

For OneBonsai, this is a natural evolution of building technology that supports people in difficult, expensive, or dangerous contexts. We see emerging technology as most valuable when it helps solve big, costly, or risky challenges in real-world settings.

5. Scaling innovation faster

One of the biggest hurdles in DeepTech is the isolated pilot problem: a solution works in one room, one camera setup, or one site, but fails to scale across a broader organization.

The strength of a multimodal foundation model is that it can generalize across environments with limited fine-tuning. That means the same core platform can support multiple sites and multiple use cases, from pedestrian safety in smart cities to equipment movement in construction or compliance monitoring in manufacturing.

This aligns closely with OneBonsai’s focus on ease of use, scalability, and platforms that can be deployed across sectors.

What happens with your data?

For many organizations, especially in industrial, public, or defense settings, data governance is just as important as model performance.

That is why deployment flexibility matters. Depending on your infrastructure, the platform can be deployed locally, hosted in a private environment, or run in the cloud. This gives organizations the freedom to choose the setup that best matches their security, latency, and compliance requirements.

For companies that want tighter control over sensitive operational data, local or on-premise deployment can help keep critical data flows inside their own environment. For teams still evaluating the right infrastructure strategy, hybrid approaches are also possible.

At OneBonsai, we do more than develop the AI platform itself. Thanks to our strong relationships with HPE and NVIDIA, we also support the full deployment of the solution in the setup that fits your organization, whether that means on-premise, cloud, hybrid, or fully managed infrastructure.

What’s next? From object recognition to physical intelligence

Today, the platform works with visual and audio input and produces structured outputs such as warnings, classifications, and text-based alerts. Those outputs can already be connected to practical effectors like alarms, dashboards, calls, or messages to employees.

But this is only the beginning.

Once a system can reliably interpret physical reality, the next step is to connect that understanding to automated response. In other words, the platform evolves from object recognition into physical intelligence.

Imagine an incident at a train crossing. Instead of only notifying local authorities after detection, the system could immediately trigger a connected safety protocol. That might mean activating barriers, warning nearby operators, or sending a signal into a broader control system to reduce response time.
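One way to picture such a protocol is a table that maps each detected event type to an ordered list of response steps. A confidence gate falls back to warning a person whenever the system is unsure or the event is unknown. The sketch below is purely illustrative: the event names, the site identifier, the 0.95 threshold and the three effector functions are hypothetical stand-ins for the interfaces a real crossing or control system would expose. Here they only return a description of what they would do.

```python
from typing import Callable

Action = Callable[[str], str]


# Hypothetical effectors; a real deployment would call the site's own control interfaces.
def activate_barriers(site: str) -> str:
    return f"barriers activated at {site}"


def warn_nearby_operators(site: str) -> str:
    return f"nearby operators warned at {site}"


def signal_control_system(site: str) -> str:
    return f"control system signalled for {site}"


# Hypothetical protocol table: event type -> ordered response steps.
PROTOCOLS: dict[str, list[Action]] = {
    "person_approaching_closed_crossing": [activate_barriers, warn_nearby_operators],
    "person_on_crossing": [signal_control_system, warn_nearby_operators],
}


def respond(event_type: str, site: str, confidence: float,
            auto_threshold: float = 0.95) -> list[str]:
    """Run the protocol for an event, keeping a human in the loop when unsure."""
    steps = PROTOCOLS.get(event_type)
    if steps is None or confidence < auto_threshold:
        # Unknown event or not confident enough for automated actions: inform a person.
        steps = [warn_nearby_operators]
    return [step(site) for step in steps]


print(respond("person_on_crossing", "LC-12", confidence=0.97))
print(respond("person_on_crossing", "LC-12", confidence=0.80))
```

Keeping the protocol in a table rather than in code branches makes it easier to review, and the fallback step ensures that every detection reaches a person even when no automated action is taken.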

This is where multimodal AI becomes more than perception. It becomes an intelligent operational layer between the physical world and the systems designed to protect it.

And when combined with digital twins, simulation environments, and training systems, that loop becomes even more powerful: detect, predict, train, improve, repeat. It reflects how OneBonsai approaches AI-enhanced digital twins, as proactive tools for smart analysis, prediction, optimization, and automated decision support.

Final thought

The future of safety is not just better reporting. It is earlier detection, faster intervention, and smarter systems that help people make the right decision at the right time, powered by OneBonsai’s technologies.