Tencent’s Hunyuan World2 explores a new direction in AI-generated 3D environments by creating navigable spaces instead of static 2D outputs. This article looks at how the system performs in a real-time workflow context, where it shows strong potential for early-stage layout, atmosphere, and environment ideation.

Testing Tencent’s Hunyuan World2 in a Real-Time Workflow Context

Tencent’s Hunyuan World2 is part of a growing wave of AI systems trying to move beyond 2D generation into fully navigable 3D environments. Instead of producing a single image or short video, it generates spaces you can move through.

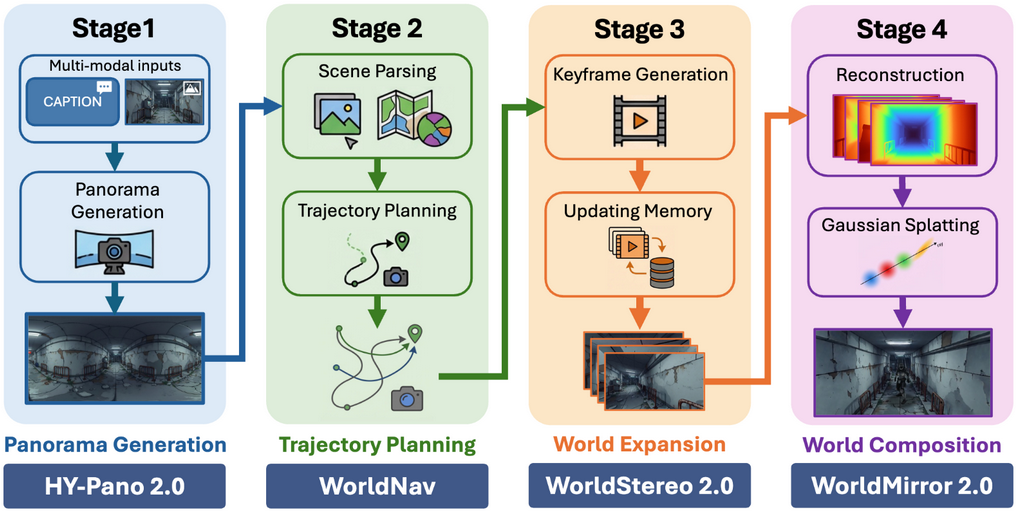

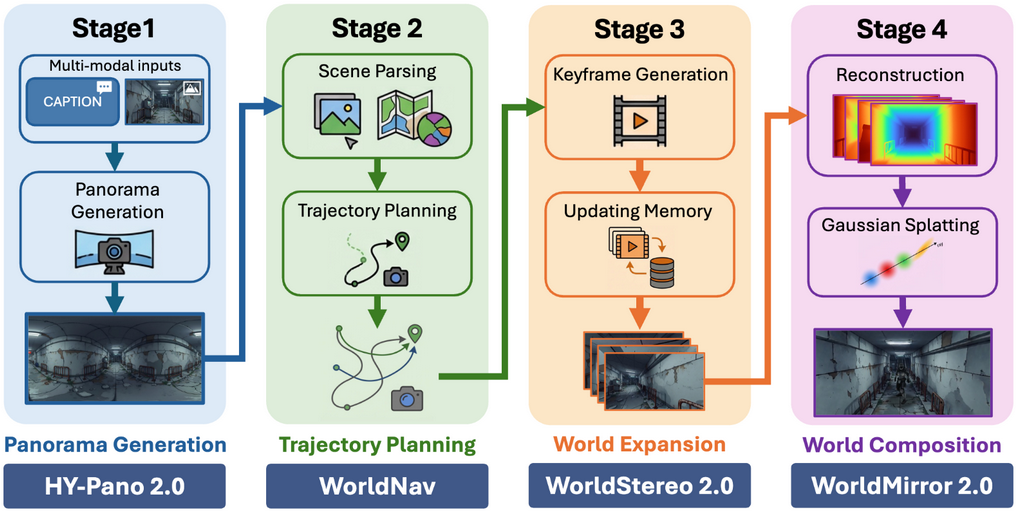

From a development perspective, this immediately stands out. In testing, the system took relatively simple prompts and turned them into explorable scenes with a sense of scale, layout, and atmosphere. It is built as a four-stage pipeline (panorama generation, path planning, scene expansion, and composition) that outputs high-fidelity, navigable 3D worlds.

What Makes It Interesting

The most interesting part is the use of Gaussian splatting. Instead of generating clean meshes with topology, the environment is built from dense point-based representations (“splats”) that store colour and spatial information and support real-time lighting. This allows very fast reconstruction of complex scenes, especially those with noisy, organic detail, and it results in environments that feel visually rich without relying on traditional geometry.
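To make the idea of a splat more concrete, here is a minimal sketch of the data one splat might carry. The field names and layout are assumptions based on the common 3D Gaussian splatting formulation, not World2's actual file format. Each splat is an oriented 3D Gaussian whose shape is described by the covariance Σ = R S Sᵀ Rᵀ, built from a rotation and per-axis scales.

```python
from dataclasses import dataclass
import math


@dataclass
class Splat:
    # Hypothetical field names; real splat formats differ in naming and layout.
    position: tuple  # (x, y, z) centre of the Gaussian
    scale: tuple     # (sx, sy, sz) standard deviation along each local axis
    rotation: tuple  # quaternion (w, x, y, z) orienting the local axes
    colour: tuple    # (r, g, b), each in [0, 1]
    opacity: float   # 0 = fully transparent, 1 = fully opaque


def rotation_matrix(q):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix."""
    w, x, y, z = q
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n  # normalise defensively
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def covariance(splat):
    """Return the 3x3 covariance Sigma = R S S^T R^T of the splat."""
    r = rotation_matrix(splat.rotation)
    s2 = [s * s for s in splat.scale]  # diagonal of S S^T
    return [
        [sum(r[i][k] * s2[k] * r[j][k] for k in range(3)) for j in range(3)]
        for i in range(3)
    ]
```

A scene is then just a very large list of these records, which is why there is no topology or UV layout to extract: the renderer blends overlapping Gaussians instead of shading triangles.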

Collision and Traversal

Normally, this kind of representation comes with a major limitation: it’s visual, not physical. You’d expect something like this to behave more like a volumetric render than a usable level. However, World2 goes a step further. In testing, the system generated approximate collision and navigable paths on top of the splat-based environment. You can walk through the space in a way that feels grounded, with the AI inferring where surfaces should be solid and where traversal should be possible.

The experience resembles walking through a rough blockout level with collision already in place. It’s not perfectly accurate, and it wouldn’t replace proper collision setup or navmesh generation, but it’s functional enough to explore layout, scale, and flow.
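How World2 infers collision is not something this test could inspect, so the following is only an illustrative sketch of one simple way to get rough collision out of splat data: treat sufficiently opaque splat centres as solid and bin them into a coarse voxel grid. The function names, the `(x, y, z, opacity)` input format, and the threshold values are all assumptions made for the example.

```python
import math


def build_occupancy(splats, voxel_size=0.25, min_opacity=0.5):
    """Mark a voxel as solid if it contains an opaque-enough splat centre.

    `splats` is an iterable of (x, y, z, opacity) tuples (assumed format).
    Returns a set of integer voxel coordinates.
    """
    occupied = set()
    for x, y, z, opacity in splats:
        if opacity >= min_opacity:
            occupied.add((
                math.floor(x / voxel_size),
                math.floor(y / voxel_size),
                math.floor(z / voxel_size),
            ))
    return occupied


def is_blocked(occupied, point, voxel_size=0.25):
    """Return True if `point` (x, y, z) falls inside a solid voxel."""
    key = tuple(math.floor(c / voxel_size) for c in point)
    return key in occupied


# Usage: one opaque splat near the origin, one faint splat further away.
grid = build_occupancy([(0.1, 0.1, 0.1, 0.9), (1.0, 0.0, 0.0, 0.1)])
print(is_blocked(grid, (0.2, 0.2, 0.2)))  # inside the solid voxel
print(is_blocked(grid, (1.0, 0.0, 0.0)))  # faint splat was ignored
```

A grid like this is coarse in the same way the generated collision feels coarse: good enough to stop a player walking through a wall, but nowhere near a hand-authored collision mesh or navmesh.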

Current Limitations

That said, the limitations are still clear. The lack of clean meshes means you can’t directly integrate these environments into a production pipeline. There’s no topology, UVs, or structured assets to work with. Control also remains high-level.

Where It Becomes Useful

This becomes useful in early-stage development. For environment artists and level designers, tools like this could accelerate:

- Blockout ideation

- Composition and layout testing

- Mood and atmosphere exploration

Conclusion

Hunyuan World2 doesn’t replace traditional tools, but it complements them in an interesting way. By combining splat-based rendering, real-time lighting, and inferred collision/traversal, it starts to bridge the gap between visual generation and spatial design.

It’s still early, but as a concepting and exploration tool, it already hints at a future where building and testing 3D worlds becomes significantly faster and much more iterative.

About the Authors

About Briana Marinescu

Technical Art Intern exploring the use of AI in real-time art pipelines. Focused on bridging art and technology through automation and procedural workflows.