Classic computer vision tells you what it sees; a Multimodal Vision-Agent AI platform helps you decide what to do about it. Learn how extending traditional detection with context, risk verification, and agentic workflows turns raw video into actionable operational intelligence for complex environments like rail, logistics, and manufacturing.

From Multimodal Awareness to a Multimodal Vision-Agent AI Platform

In our earlier article, we explored how multimodal AI can improve safety and efficiency by combining video, audio, and contextual signals to detect unsafe situations earlier and support faster intervention. That was the first step in the story: moving from isolated object recognition toward broader situational awareness.

The next step is turning that awareness into something operationally useful.

In complex environments such as rail infrastructure, warehouses, industrial sites, logistics hubs, and public spaces, the real challenge is rarely just seeing an event. It is understanding whether the event matters, how urgent it is, what evidence supports it, and what should happen next.

That is where a Multimodal Vision-Agent AI platform comes in.

Rather than stopping at detection, this kind of platform combines real-time perception with context, verification, search, summarization, and reporting. This mirrors the broader direction of the market, where modern video AI systems are increasingly being designed not only to analyze footage, but also to support agentic workflows such as search, question answering, reporting, and alert verification.

For OneBonsai, this is a natural continuation of the direction we described in that article: using AI not only to observe the physical world, but to help organizations respond to it more intelligently.

From perception to operational intelligence

Traditional computer vision systems are often designed to answer narrow questions. Is there a person in frame? Did something cross a line? Is a vehicle present in a zone? These capabilities remain useful, especially when speed and reliability are critical.

But real operational teams need more than a binary signal.

A rail operator wants to know whether a crossing event is truly dangerous or simply unusual. A warehouse supervisor wants to know whether a person and a vehicle were merely nearby or actually on a collision path. A city operator needs to understand whether an alert should be ignored, reviewed, or escalated immediately. In these situations, raw detections are only the beginning.

A Multimodal Vision-Agent AI platform extends perception into operational intelligence. It combines fast scene understanding with contextual reasoning, evidence retrieval, and clear outputs that humans can actually use.

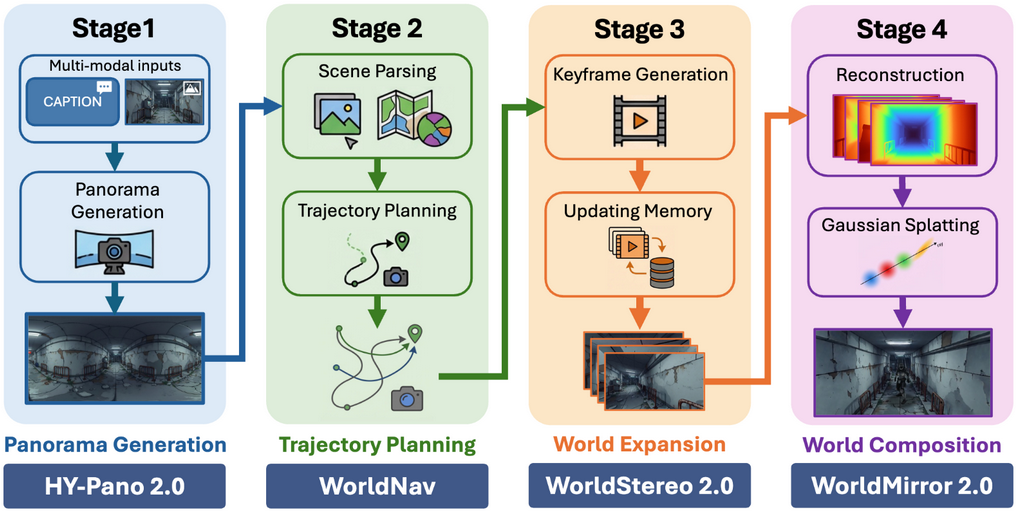

A layered way to understand the system

To keep the architecture understandable without exposing internal implementation details, it helps to describe it as a layered operational pipeline:

- Multimodal sensing

- Real-time scene understanding

- Event and context extraction

- Risk verification

- Search, summarization, and reporting

- Operational response interfaces

This framing is easy for business stakeholders to follow, but it also reflects how modern vision-agent systems are increasingly structured: real-time intelligence at the front, analytics in the middle, and agent workflows on top for reporting, question answering, and search.
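To make the layering concrete, here is a minimal sketch that models the middle layers as functions, each taking the previous layer's output. It is illustrative only and does not reflect any internal implementation: the event keys, the `ZONES` camera-to-zone mapping, and the "person in a level-crossing zone" risk rule are all hypothetical. The input list stands in for multimodal sensing, and the returned summaries are what an operational response interface would display.

```python
from typing import Callable, Dict, List

# Illustrative event shape; the keys ("camera", "labels", ...) are assumptions.
Event = Dict[str, object]
Stage = Callable[[List[Event]], List[Event]]

# Hypothetical camera-to-zone mapping standing in for site metadata.
ZONES = {"cam-1": "level crossing", "cam-2": "loading dock"}


def scene_understanding(events: List[Event]) -> List[Event]:
    # Keep only observations where the perception model reported something.
    return [e for e in events if e["labels"]]


def context_extraction(events: List[Event]) -> List[Event]:
    # Attach the zone each camera covers, so later layers can reason about place.
    return [{**e, "zone": ZONES.get(e["camera"], "unknown")} for e in events]


def risk_verification(events: List[Event]) -> List[Event]:
    # Placeholder rule: a person inside a level-crossing zone counts as a risk.
    return [e for e in events if "person" in e["labels"] and e["zone"] == "level crossing"]


def reporting(events: List[Event]) -> List[Event]:
    # Produce a short human-readable summary for the response interface.
    return [
        {**e, "summary": f"{', '.join(e['labels'])} at {e['zone']} ({e['camera']})"}
        for e in events
    ]


PIPELINE: List[Stage] = [scene_understanding, context_extraction, risk_verification, reporting]


def run_pipeline(events: List[Event], stages: List[Stage] = PIPELINE) -> List[Event]:
    # Each layer consumes the output of the one before it.
    for stage in stages:
        events = stage(events)
    return events
```

Because each layer shares the same signature, a layer can be swapped (for example, replacing the rule-based verifier with a model-based one) without touching the others.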

Why this matters beyond detection

The difference between a classic vision pipeline and a vision-agent workflow is not just technical. It is operational.

A classic computer vision system can tell you what was likely seen. A Multimodal Vision-Agent AI platform helps determine what that event means in context.

That distinction matters because many environments generate more video and more alerts than any human team can realistically process. In many current vision-agent architectures, one layer generates candidate events, while another layer verifies those events against the relevant footage and adds reasoning before publishing a verdict downstream. The goal is not to produce more alerts. The goal is to produce better ones.
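The candidate-then-verify pattern can be sketched in a few lines. This is an illustration under stated assumptions, not a reference design: the two thresholds are arbitrary, and `stub_verifier` is a hypothetical stand-in for a slower, clip-level check (in practice often a vision-language model reviewing the surrounding footage). Only confirmed verdicts are published downstream, each carrying its reason.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Candidate:
    event_id: str
    kind: str
    detector_score: float  # confidence from the fast perception layer


@dataclass
class Verdict:
    event_id: str
    confirmed: bool
    reason: str


# Hypothetical verifier signature: returns (verification score, explanation).
Verifier = Callable[[Candidate], Tuple[float, str]]


def stub_verifier(c: Candidate) -> Tuple[float, str]:
    # Illustrative stand-in for a clip-level review; real logic would inspect footage.
    if c.kind == "near_miss":
        return 0.9, "person and vehicle trajectories intersect"
    return 0.2, "objects nearby but trajectories diverge"


def verify_candidates(candidates: List[Candidate], verifier: Verifier,
                      candidate_threshold: float = 0.3,
                      confirm_threshold: float = 0.7) -> List[Verdict]:
    verdicts = []
    for c in candidates:
        if c.detector_score < candidate_threshold:
            continue  # too weak to justify the cost of verification
        score, reason = verifier(c)
        verdicts.append(Verdict(c.event_id, score >= confirm_threshold, reason))
    return verdicts


def publish(verdicts: List[Verdict]) -> List[Verdict]:
    # Only confirmed verdicts reach operators; the rest stay in the log for review.
    return [v for v in verdicts if v.confirmed]
```

The design choice here is cost-driven: the cheap detector can run on every frame with a low threshold, while the expensive verifier only sees the candidates that pass it.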

This is especially valuable in settings where false positives are expensive, manual review is slow, and response quality matters as much as response speed.

From monitoring to operational memory

Another important shift is that modern video AI is no longer only about what happens live. It is also about what can be found later.

A Multimodal Vision-Agent AI platform turns video into operational memory. Instead of asking a person to scrub through hours of footage, a team can search for a relevant event, retrieve the right clip, summarize what happened, and generate a report with much less manual effort.
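A minimal sketch of search over operational memory, assuming each stored clip has already been indexed with a short text description. The `StoredEvent` fields and the `clip://` URIs are hypothetical, and the keyword-overlap scoring is a deliberately simple substitute for the embedding-based retrieval a production system would more likely use.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StoredEvent:
    clip_uri: str      # hypothetical pointer to the stored video segment
    start_s: float
    end_s: float
    description: str   # caption produced when the clip was indexed


def search(events: List[StoredEvent], query: str,
           after_s: float = 0.0, before_s: float = float("inf")) -> List[StoredEvent]:
    """Rank clips in a time window by how many query terms their description contains."""
    terms = query.lower().split()
    hits = []
    for e in events:
        if e.end_s < after_s or e.start_s > before_s:
            continue  # outside the requested time window
        text = e.description.lower()
        score = sum(term in text for term in terms)
        if score:
            hits.append((score, e))
    # Best match first; ties broken by earliest clip.
    hits.sort(key=lambda pair: (-pair[0], pair[1].start_s))
    return [e for _, e in hits]
```

For example, a query for "forklift pedestrian" ranks a clip described as "Forklift reverses near pedestrian" above one that only mentions a pedestrian, and the returned `clip_uri` is what a summarization or reporting step would then consume.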

That is where the “vision-agent” part becomes especially important. These agents do not just look at a frame. They help orchestrate workflows around video understanding, search, summarization, and reporting. Current blueprint-style systems in the market increasingly support exactly these capabilities, including video retrieval, action recognition, summarization, Q&A, and alert workflows.

In industrial environments, public infrastructure, and logistics operations, that has a direct impact on investigation time, reporting burden, and decision quality.

Human-in-the-loop remains essential

The point of these systems is not to remove people from the process. It is to make human expertise more effective.

In safety-critical environments, operators still need to make judgments, escalate incidents, and interpret edge cases. What a Multimodal Vision-Agent AI platform can do is reduce noise, add context, and surface the highest-priority events first, which shortens overall reaction time. It can also provide summaries and evidence in a format that makes the human decision easier and faster.
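Surfacing the highest-priority events first is, at its core, a priority queue. The sketch below uses Python's `heapq`; the four-level `SEVERITY` scale and the "severity first, then confidence" ordering are illustrative assumptions, not a prescribed triage policy. The operator still decides what to do with each alert; the queue only controls the order in which alerts are presented.

```python
import heapq
import itertools
from typing import List, Tuple

# Illustrative severity scale: lower rank means more urgent.
SEVERITY = {"critical": 0, "high": 1, "medium": 2, "low": 3}


class AlertQueue:
    """Presents the most severe, most confident alerts to the operator first."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, float, int, str]] = []
        self._counter = itertools.count()  # tie-breaker keeps equal alerts in arrival order

    def push(self, alert_id: str, severity: str, confidence: float) -> None:
        # heapq is a min-heap: rank by severity, then by higher confidence (negated).
        entry = (SEVERITY[severity], -confidence, next(self._counter), alert_id)
        heapq.heappush(self._heap, entry)

    def pop(self) -> str:
        return heapq.heappop(self._heap)[-1]

    def __len__(self) -> int:
        return len(self._heap)
```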

That balance between automation and oversight is one reason this model is so compelling in enterprise settings. It supports the operator rather than overwhelming them.

Where the value is most immediate

The opportunity is strongest in environments with four common characteristics:

- many cameras or sensor streams

- high manual review cost

- operational risk if alerts are missed or misunderstood

- a clear team that owns response

That includes rail safety, warehouse operations, industrial monitoring, smart-city operations, logistics hubs, and other critical infrastructure contexts.

These are exactly the kinds of environments where broader vision-agent architectures are now being positioned: not as isolated model demos, but as operational systems that connect perception, analytics, and agentic decision support.

Why this is better than classic machine learning alone

Classic vision systems are still useful, and they remain an important part of the stack. But on their own, they are often limited by rules, fixed attributes, and the need for manual interpretation.

A Multimodal Vision-Agent AI platform adds:

- broader contextual understanding

- verification of candidate incidents

- natural-language explanations

- search across stored video

- automated summarization and reporting

- smoother integration into operational workflows

That does not replace classic CV. It builds on top of it.

In practical terms, a fast perception layer can identify candidate risks, while a reasoning and retrieval layer helps verify them, explain them, and connect them to the right next step. That layered structure is increasingly reflected in modern vision-agent reference architectures across the market.

A bridge to digital twins and continuous improvement

This is also where the connection to digital twins becomes especially important.

In the earlier article, we described a loop of detect, predict, train, improve, repeat. A Multimodal Vision-Agent AI platform makes that loop more concrete. Once an environment can be observed, interpreted, and logged in structured form, those outputs can feed simulation, training, synthetic data generation, and system refinement.
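One way to picture the "logged in structured form" step is a JSON Lines log that downstream simulation or training tools can read. In this sketch the `LoggedEvent` fields are hypothetical, and the selection rule (flag records where the verifier's verdict and the operator's action disagree) is just one plausible heuristic for picking informative cases to replay or relabel, not a method described in the article.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class LoggedEvent:
    event_id: str
    site: str
    kind: str
    verified: bool         # verdict from the risk-verification layer
    operator_action: str   # illustrative values: "escalated" or "dismissed"


def to_jsonl(events: List[LoggedEvent]) -> str:
    # One JSON object per line, a common format for feeding training pipelines.
    return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in events)


def disagreements(jsonl: str) -> List[dict]:
    """Return records where the system's verdict and the operator's action differ.

    Verified but dismissed suggests a false positive; unverified but escalated
    suggests a miss. Both are candidates for simulation or retraining.
    """
    records = [json.loads(line) for line in jsonl.splitlines() if line.strip()]
    return [r for r in records if r["verified"] != (r["operator_action"] == "escalated")]
```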

That broader direction fits closely with OneBonsai’s positioning around AI, simulation, and digital twins in real operational environments.

For organizations, that means the value is not just real-time awareness. It is the ability to improve the full operational loop over time.

A simple way to explain it

The easiest way to describe the progression is this:

Multimodal AI helps a system understand what is happening. A Multimodal Vision-Agent AI platform helps an organization decide what to do about it.

That is the clearest bridge between the earlier article and this one.

The first step was making environments more observable. The next step is making them more explainable, searchable, and actionable.

Final thought

The future of operational safety is not just better detection. It is better judgment at scale.

As camera and sensor infrastructure continues to grow, organizations will need more than dashboards full of events and alerts. They will need systems that can interpret, verify, summarize, and support response in ways that are usable in real operations.

That is why the shift from multimodal awareness to a Multimodal Vision-Agent AI platform matters.

It is not a break from the previous story. It is the continuation of it.